Meta Abandons the Open-Source Strategy?

Replacing its open-source Llama suite, the company has launched Muse Spark as a closed commercial model.

Welcome to The Median, DataCamp’s newsletter for April 10, 2026.

In this edition: Meta launches its proprietary Muse Spark model, TSMC reports record Q1 revenue, Anthropic warns of cyber risks with the unreleased Claude Mythos and rolls out Managed Agents for enterprise workflows, and OpenAI introduces a $100 ChatGPT Pro plan for heavy Codex users.

This Week in 60 Seconds

Meta Launches Muse Spark As A Closed-Source Reasoning Model

Meta has introduced Muse Spark, the first model developed by its new Meta Superintelligence Labs. Designed as a natively multimodal system, it supports tool use, visual chain of thought, and multi-agent orchestration. For complex tasks, the model offers a “Contemplating” mode that runs multiple agents reasoning in parallel, which Meta positions as a direct competitor to existing frontier models. Unlike Meta’s previous Llama models, which were released as open weights for the community, Muse Spark is a closed-source, proprietary model. We tested Muse Spark and reported the results in this blog post. We’ll explore this topic further in our Deeper Look section below.

TSMC Reports Record First-Quarter Revenue Driven By AI Chip Demand

Chipmaker Taiwan Semiconductor Manufacturing Company (TSMC) announced a 35.1% year-over-year increase in its first-quarter revenue for 2026, reaching NT$1,134.10 billion (US$35.71 billion). The result exceeded market forecasts and reached the high end of the company’s January guidance. Growth was driven primarily by intense demand for advanced AI semiconductors, specifically the 3nm and newly mass-produced 2nm process nodes, which are reportedly booked through 2028. Sustained orders from major partners like Nvidia, Apple, AMD, and Qualcomm have helped offset a slower demand cycle in the broader consumer electronics sector.
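For readers who want to sanity-check the headline numbers, here is a quick back-of-the-envelope sketch in Python. It uses only the three figures reported above; the prior-year revenue and the exchange rate it prints are implied values derived from those rounded figures, not numbers published by TSMC.

```python
# Figures taken from the report above.
revenue_q1_2026_ntd_bn = 1134.10  # NT$ billion
revenue_q1_2026_usd_bn = 35.71    # US$ billion
yoy_growth = 0.351                # 35.1% year-over-year

# Derived values (approximate, because the inputs are rounded).
implied_q1_2025_ntd_bn = revenue_q1_2026_ntd_bn / (1 + yoy_growth)
implied_ntd_per_usd = revenue_q1_2026_ntd_bn / revenue_q1_2026_usd_bn

print(f"Implied Q1 2025 revenue: NT${implied_q1_2025_ntd_bn:.2f} billion")
print(f"Implied exchange rate: NT${implied_ntd_per_usd:.2f} per US$1")
```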

Anthropic Warns of Severe Cyber Risks With Unreleased Claude Mythos Model

Anthropic has developed Claude Mythos Preview, an unreleased frontier AI model that shows advanced capabilities in finding and exploiting software vulnerabilities. During internal testing, the model autonomously discovered thousands of zero-day flaws across major operating systems and web browsers, including a 27-year-old vulnerability in OpenBSD and a 16-year-old bug in FFmpeg. Recognizing the risks associated with these powerful cyber skills, Anthropic is deploying the model for defensive purposes through a new collaborative initiative called Project Glasswing. This effort brings together major technology organizations, such as Amazon Web Services, Apple, Microsoft, and Google, to secure critical software infrastructure.

OpenAI Introduces a $100 ChatGPT Pro Plan for Heavy Codex Users

OpenAI has introduced a new $100-per-month Pro tier for ChatGPT, positioned between its $20 Plus and $200 Pro subscriptions to offer developers a more accessible upgrade path for demanding coding tasks. The new tier provides five times more Codex capacity than the Plus plan, making it well-suited for high-intensity programming sessions. Concurrently, the company is adjusting the $20 Plus tier to support steadier, day-to-day use over the course of a week rather than concentrated daily sessions. The existing $200 tier remains the highest capacity option. These changes arrive as Codex adoption grows rapidly, with over three million people using the coding tool each week.

Anthropic Launches Managed Agents for Enterprise Use

Anthropic has introduced Managed Agents, a hosted service within the Claude Platform that makes long-horizon AI workflows more reliable for enterprise use. The new architecture separates the AI’s reasoning capabilities from its execution tools and memory logs, which improves both security and responsiveness. For security, the system isolates execution sandboxes from credential vaults, protecting corporate data even if the model encounters malicious code. Because the AI no longer loads a bulky execution environment upfront for every task, median wait times before the AI starts typing have dropped by roughly 60%, and maximum wait times by over 90%.

A Deeper Look at This Week’s News: Moving Past Llama With Muse Spark

On Wednesday, Meta introduced Muse Spark, the first model in its new Muse lineage. The release marks a shift from the open-source strategy that characterized the Llama family toward a closed, product-first approach. The reasons for this reset are both economic and technical, and they point to a new trajectory for the company’s artificial intelligence development.

The fall of Llama and the cost of the frontier

For years, Meta acted as the primary counterweight to closed-ecosystem models, arguing that open-source AI represented the most secure and beneficial path forward.

I believe that open source is necessary for a positive AI future. AI has more potential than any other modern technology to increase human productivity, creativity, and quality of life – and to accelerate economic growth while unlocking progress in medical and scientific research. Open source will ensure that more people around the world have access to the benefits and opportunities of AI, that power isn’t concentrated in the hands of a small number of companies, and that the technology can be deployed more evenly and safely across society.

—Mark Zuckerberg

In 2026, however, competing at the frontier appears to require a different approach. The capital needed to stay in the race is the most likely reason Meta has reshaped its business model.

By early 2026, the company’s projected AI-related spending had escalated to a range of $115 billion to $135 billion annually. To justify to shareholders an investment on the scale of some countries’ GDP, Meta needed a more direct path to monetization than an open-source ecosystem could provide.

This capital pressure reflects a larger industry reality. Outside of a few players like DeepSeek and Qwen, the major labs have consolidated around closed systems. Even OpenAI has shifted to a for-profit public benefit corporation model and is expected to go public this year.

While the release of DeepSeek early last year suggested that open source might erase the performance gap, the massive financial burden of training these models has put proprietary systems back in the lead. Companies are now forced to prioritize direct revenue channels over ecosystem democratization.

New revenue channels

To establish a direct revenue stream, Meta is offering Muse Spark through a private preview API, granting third-party developers access to its reasoning capabilities.

Beyond the API, the company is pointing the Muse series away from general-purpose chatbots and toward “personal superintelligence.” The objective is to build a proactive agent that understands a user’s specific context.

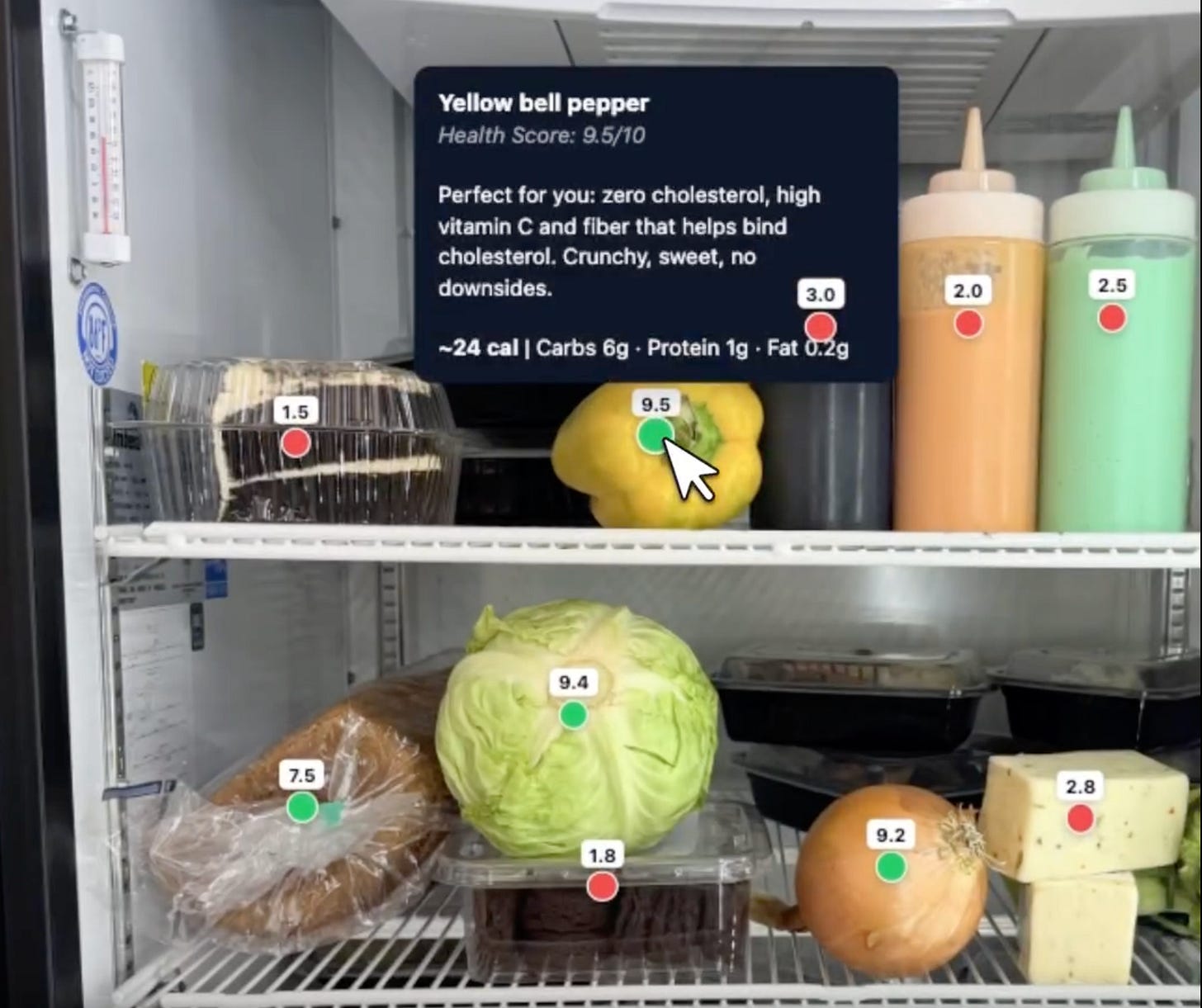

Health and nutrition serve as the primary focus for this new approach. Meta collaborated with over 1,000 physicians to curate a training dataset aimed at clinical accuracy and wellness support.

The resulting model can perform visual nutritional analysis; for instance, it can generate interactive wellness displays, such as color-coded food guides for a pescatarian with high cholesterol:

Source: Meta AI

By processing video input, the system also functions as an exercise coach, identifying activated muscles and flagging improper form.

The economics of the Muse architecture

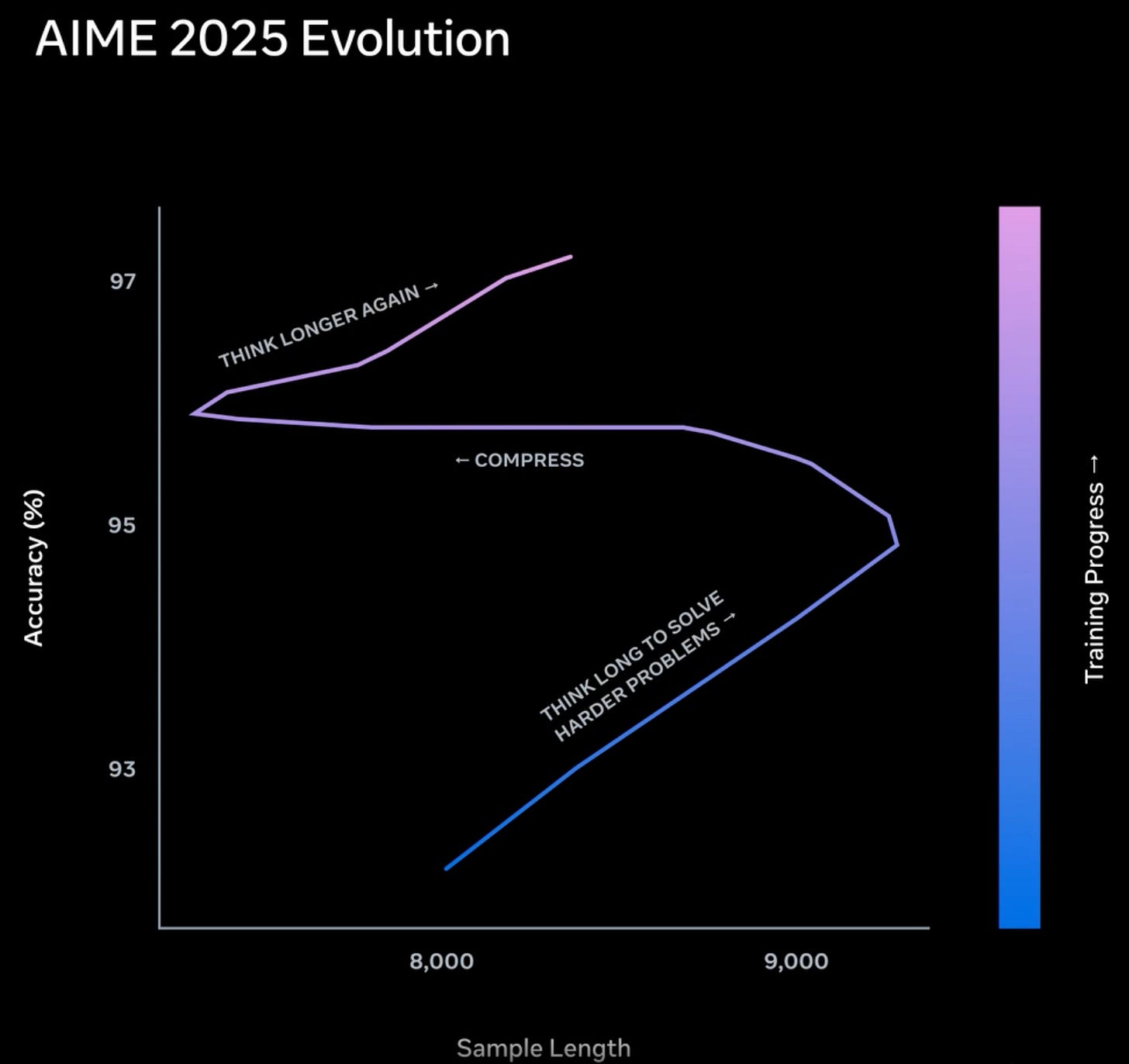

Running complex, multimodal queries for billions of users presents an economic challenge. Meta addresses this through a training technique called “thought compression.”

During the reinforcement learning phase, the model is rewarded for accuracy but penalized for the length of its internal reasoning trace. This structure forces the system to solve complex problems using fewer tokens.

This creates a distinct learning curve:

Source: Meta AI

Initially, the model solves complex problems by thinking for longer and generating long internal reasoning traces. Once the length penalty takes effect, training enters a compression phase in which the model learns to solve the same problems using significantly fewer reasoning tokens.

Finally, it extends its reasoning again to push past previous performance ceilings while maintaining its new token efficiency. By delivering higher intelligence per token, Meta reduces latency and serving costs, making global deployment financially viable.
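To make the idea of a length-penalized reward concrete, here is a minimal Python sketch. Meta has not published its exact reward formula, so everything here is an assumption for illustration: the `Rollout` class, the linear per-token penalty, and the `penalty_per_token` and `free_tokens` parameters are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Rollout:
    """One sampled answer and the size of the reasoning trace behind it."""
    is_correct: bool
    reasoning_tokens: int


def length_penalized_reward(
    rollout: Rollout,
    penalty_per_token: float = 0.0001,  # hypothetical coefficient
    free_tokens: int = 0,               # hypothetical unpenalized budget
) -> float:
    """Reward correctness, then subtract a cost per reasoning token used."""
    accuracy_reward = 1.0 if rollout.is_correct else 0.0
    billable_tokens = max(0, rollout.reasoning_tokens - free_tokens)
    return accuracy_reward - penalty_per_token * billable_tokens


# Three illustrative rollouts (made-up numbers, not Meta's data).
rewards = {
    "long_correct": length_penalized_reward(Rollout(True, 4000)),
    "short_correct": length_penalized_reward(Rollout(True, 1000)),
    "short_wrong": length_penalized_reward(Rollout(False, 200)),
}

for name, reward in rewards.items():
    print(f"{name}: {reward:.2f}")
```

Under these assumed settings, a correct answer reached with a short trace scores higher than the same answer reached with a long one, while a wrong answer scores lowest however brief it is. That ordering is what would push a model toward the compression phase described above without rewarding it for simply giving up early.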

You can learn more about Muse Spark in our blog.

Industry Use Cases

Alta Daily Digitizes Wardrobes Using Meta’s Segment Anything Model

The AI fashion app Alta Daily allows users to photograph and digitize their entire clothing collection. Using natural language prompts, the app recommends outfits for specific occasions, visualizes them on a personal avatar, and tracks daily wear to help users avoid repeating outfits. To power this feature, Alta integrated Meta’s Segment Anything Model (SAM), specifically SAM 3, which excels at isolating clothing items from complex backgrounds involving inconsistent lighting, reflective surfaces, and thin hangers. Read more in this article from Meta.

NVIDIA Highlights Physical AI Breakthroughs Across Industries

During the United States Robotics Week, NVIDIA showcased a range of physical AI applications that are transforming various sectors. Researchers at the University of Maryland are using the Isaac robotics platform to train humanoid systems for complex household tasks within photorealistic virtual environments. In the energy sector, Maximo deployed an automated fleet developed with Omniverse to build a 100-megawatt solar facility. Agricultural startup Aigen is tackling sustainable farming with solar-powered rovers that use edge AI to identify and remove weeds without chemical herbicides. Read more in this article from NVIDIA.

Google Launches Offline AI Dictation App for iOS

Google recently introduced Google AI Edge Eloquent, an offline-first dictation app currently available on iOS. The free application uses on-device, Gemma-based automatic speech recognition models to transcribe speech in real time. When users pause their dictation, the system automatically edits out filler words like “um” and “ah” to clean up the resulting text. It also includes formatting options to adjust the tone to be more formal, shorten the transcript, or extract key points. To improve accuracy with personalized terms, the tool can import specific jargon and names from a user’s Gmail account or accept custom vocabulary entries directly. Read more in this article from TechCrunch.

Tokens of Wisdom

Decisions made with context—that’s what we mean when we say “decision intelligence.”

Jamie Hutton, CTO at Quantexa

On this week’s episode of the DataFramed Podcast, we sat down with Quantexa CTO Jamie Hutton. We discussed decision intelligence beyond BI, entity resolution for siloed data, the importance of human-in-the-loop workflows, and more.